François Lanusse

Cosmologist / Deep Learning Researcher @ CNRS, member of the CosmoStat Laboratory near Paris.

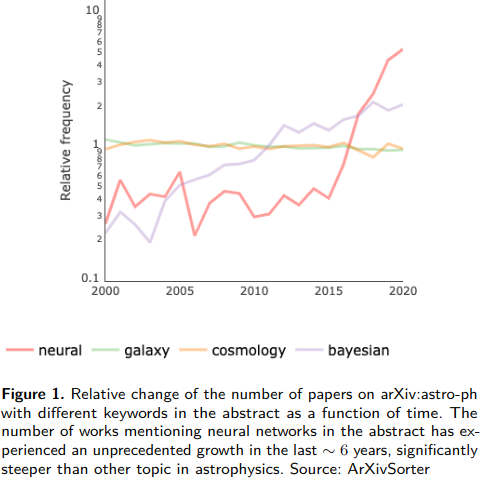

I am a CNRS researcher working at the intersection of Deep Learning, Statistical Modeling, and Observational Cosmology. I am particularly interested in combining tools and methodologies from Machine Learning (automatic differentiation, generative AI) with physical modeling for the analysis of cosmological surveys.

I am an active member of both the LSST Dark Energy Science Collaboration (LSST DESC) and the LSST Informatics & Statistics Science Collaboration (LSST ISSC).

From 2023 to 2024, I was an Associate Research Scientist at the Flatiron Institute in New York City, where I was part of the inaugural Polymathic AI research group.

Before my CNRS position, I was a postdoctoral fellow at the Berkeley Center for Cosmological Physics (BCCP) and with the Foundation of Data Analysis (FODA) institute at UC Berkeley, working with Prof. Uroš Seljak. Before that, I was a postdoctoral researcher in the McWilliams Center for Cosmology at Carnegie Mellon University, where I worked with Prof. Rachel Mandelbaum on weak gravitational lensing measurements and systematics, and interacted with both the Statistics and Machine Learning departments.

news

| Date | News |
|---|---|
| Dec 12, 2025 | Presented AION-1, our omnimodal foundation model for astronomical sciences, at the NeurIPS 2025 main conference in San Diego! |
| Dec 7, 2025 | Our joint CosmoStat and Ciela team won second place at the FAIR Universe – Weak Lensing Uncertainty Challenge at NeurIPS 2025! |
| Oct 10, 2025 | Great haul with the Polymathic AI team at NeurIPS 2025: 7 papers |
| Dec 1, 2024 | Released the first version of the Multimodal Universe dataset, the first “web scale” dataset of astronomical data for foundation model training. |
| Sep 25, 2023 | Starting a one-year position at the Flatiron Institute! I will be based in New York City until Sept. 2024. |
| Jul 29, 2023 | Check out all the great submissions that were accepted at the 2023 ICML ML4Astro Workshop in Hawai’i |
| May 22, 2023 | Great three days of machine learning and astrophysics at CCA for the Cosmic Connections Symposium. All recordings are here. |
| Feb 13, 2023 | The jax-cosmo paper is out on arXiv |
| Jan 30, 2023 | Open PhD position at CEA Saclay to work with me on using generative modeling and autodiff to measure cosmic shear from space 🛰️. Application deadline March 10th. |
| Dec 12, 2022 | Very cool project |
| Nov 26, 2022 | Pre-release of jaxDecomp, a JAX library providing bindings to NVIDIA’s cuDecomp adaptive pencil decomposition library for efficient multi-node/multi-GPU 3D FFTs and halo exchanges. |
| Nov 15, 2022 | Multiple papers accepted at the Machine Learning and the Physical Sciences Workshop at NeurIPS 2022 |
| Nov 7, 2022 | New astro paper on Differentiable Halo Occupation Distributions led by Ben Horowitz, demonstrating that just because your model is stochastic and involves discrete variables doesn’t mean you can’t backprop through it |